Whenever you are lost or misguided and realize you’re treading a losing path, it’s always good to take a break and ponder why you started on this path in the first place. It’s time to go back to basics and start afresh. But what will you do if you’ve already laid a wrong foundation and are knee-deep in it?

How’d we get here? What usually happens is that we start off with one destination in mind, and as we travel down the road, we encounter lots of byways, service lanes, highways, cuts, overpasses and tracks to different destinations.

Now, as we keep coming across these, we slow down a little and start wondering whether we should jump onto the other road, because most of the traffic is headed that way. You follow it, and after covering much of the journey to this new destination, you find yourself stuck in a terrible traffic mess. It’s terrible because it seems the whole city has converged on this damn road. You’re literally stuck, as you can’t even go back. The nearest exit is many miles away, and you have to sit in this traffic for many hours before you can choose between taking the exit and carrying on to this destination. Now, seeing the huge traffic ahead and wanting to do something different instead of simply following the crowd, you decide to take the exit, head back to the point where you started, and resume the journey to your original destination.

On your way back to the point where you got distracted in the first place, you see a shortcut promising to take you to your destination quickly, sparing you the whole length of the main route. The only caveat is that this little shortcut track is all dusty and worn out and guarantees you a bumpy ride. You nevertheless resolve to bear it patiently in the hope of arriving at your destination sooner. After many miles of carefully maneuvering along this dark, crazy track, you find to your horror that it ends in a cul-de-sac because of a huge pit!

You get out of the car, slam the door and bang on the car multiple times, cursing at yourself and at the lovely green countryside. When you come to your senses, you’re glad that you didn’t actually drive into the pit and kill yourself. Relieved that you’re still alive, you feel a new rush of adrenaline. Now determined not to get distracted by shortcuts and to stay focussed on the destination, you turn the car around and return to the main highway from where you veered onto this shortcut.

You see a distance sign on the highway indicating that your original destination is only a few miles ahead. You get more excited and hit the accelerator! After you have driven a few miles, your car suddenly puffs and pants and stops. You look at your dashboard and realize you’re out of fuel!

As you get out of your vehicle, slamming the door and hurling the choicest abuses at yourself for being so stupid, the sun quietly sets in the background!

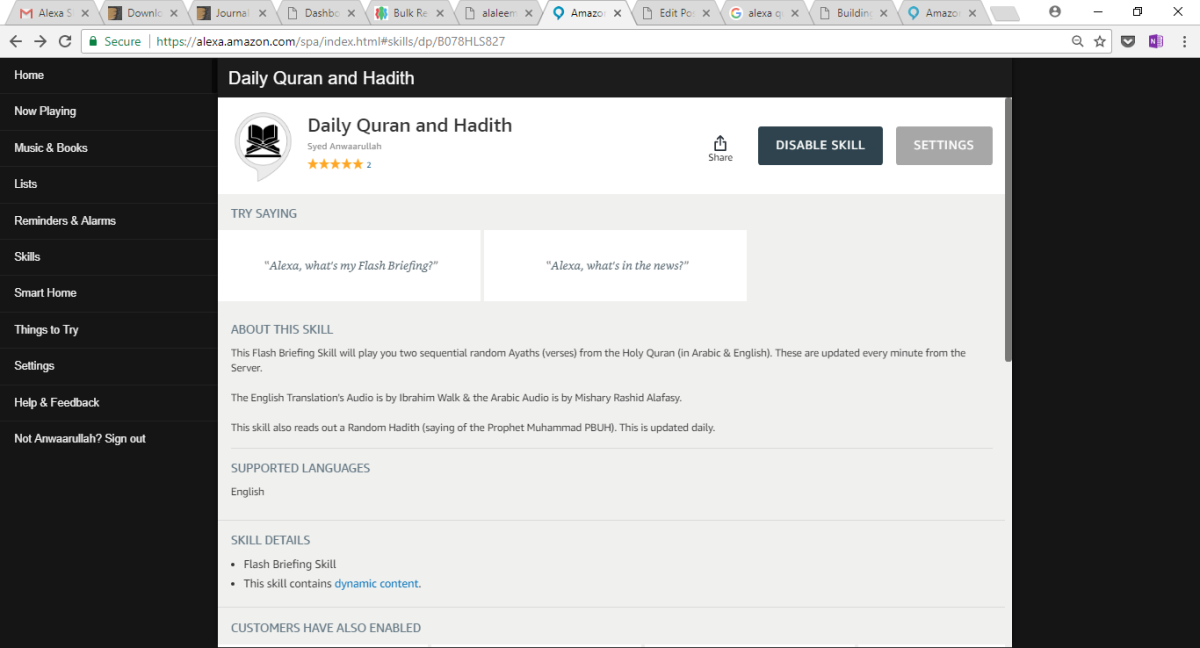
